Large language models and the Zettelkasten method

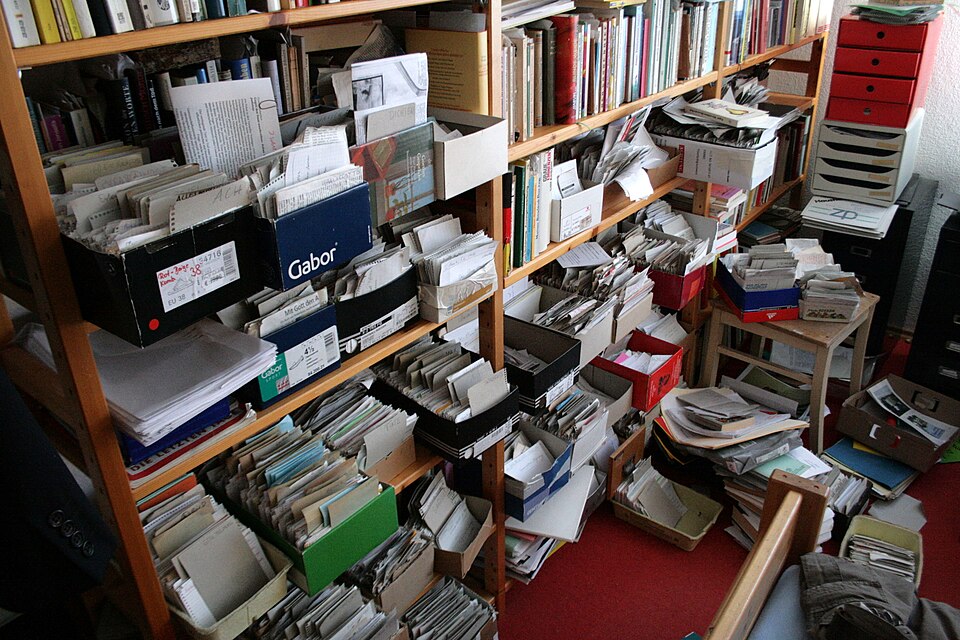

Recently I’ve been looking at two very different models of knowledge linking. The first, the Zettelkasten method, is as human as it gets. I think of it (and remember I’m a novice where it’s concerned, so this may not be a useful thought) as making an internet of your brain: taking snippets of knowledge, expressing them personally and storing them in such a way that they can be linked. No quoting or text generation is allowed. Each note must be in your own words, and tagged so it can be cross-referenced. An example of one of mine is below.

#+title: Information theory

#+date: [2025-12-30 Tue 15:15]

#+filetags: :eventstreaming:mathematics:

#+identifier: 20251230T151502

Information theory is a branch of mathematics that studies the quantification, storage and communication of information. It predates computing but is where computing draws terms such as producer (the sender of a set of information) and consumer (the receiver of a piece of information). [fn:1]

This is particularly salient in event-driven computer architecture. (See [[denote:20251230T114821][Purpose of event streaming]])

[fn:1] James Urquhart (2021): Flow Architectures, Chapter 1; O'Reilly

Naturally I use Emacs for this process. I find it calming and can imagine it being a way I would thoroughly enjoy working were I to live in a different society.
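What makes the example note linkable is its front matter (identifier and tags) plus the `denote:` link in the body. As a rough sketch of that structure, here is a small Python script that pulls those pieces out of a trimmed copy of the note. This is only an illustration, not how Denote or Emacs handle it internally; `parse_note` and the regular expressions are hypothetical helpers written for this post's single example.

```python
import re

# A trimmed copy of the example note above.
NOTE = """\
#+title: Information theory
#+date: [2025-12-30 Tue 15:15]
#+filetags: :eventstreaming:mathematics:
#+identifier: 20251230T151502

This is particularly salient in event-driven computer architecture. (See [[denote:20251230T114821][Purpose of event streaming]])
"""

# Assumption: front matter lines look like "#+key: value".
KEYWORD = re.compile(r"^#\+(\w+):[ \t]*(.*)$", re.MULTILINE)
# Assumption: links look like "[[denote:IDENTIFIER][description]]".
LINK = re.compile(r"\[\[denote:([0-9T]+)\]\[([^\]]*)\]\]")


def parse_note(text):
    """Return the title, identifier, tags and outgoing links of one note."""
    meta = {key.lower(): value.strip() for key, value in KEYWORD.findall(text)}
    # ":a:b:" splits into empty strings at both ends, so drop those.
    tags = [tag for tag in meta.get("filetags", "").split(":") if tag]
    return {
        "title": meta.get("title"),
        "identifier": meta.get("identifier"),
        "tags": tags,
        "links": LINK.findall(text),  # list of (target identifier, description)
    }


note = parse_note(NOTE)
print(note["identifier"], "->", [target for target, _ in note["links"]])
```

Run over a whole directory of notes, the identifier-to-targets pairs would form the graph of cross-references, which is the "internet of your brain" idea in miniature.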

But the society I in fact live in has meant I’m making much more progress using opencode. Large language models are the reverse of the Zettelkasten method in that they try to take the internet and make it a brain. Opencode is an excellent way to explore working with agentic AI because it offers several very capable free models, and it is trivially easy to integrate with Ollama, where free cloud models provide even more free tokens. Ollama is more associated with local models, but in my experience these aren’t yet useful, certainly not on my hardware.

Getting things done

Even using free models (I have no intention of ever paying for AI access) I’ve found I can achieve a lot. So far the only coding tasks I’ve set opencode loose on were updating my NixOS config to use Home Manager, and updating this blog to use more recent versions of its libraries and add some test coverage. These are both tasks I’ve put off for years because the cost/benefit in my free time didn’t stack up. I used the standard opencode Build agent for my config, but in the process I created an opencode config that includes the crew of Deep Space 9 as custom agents to accomplish the second and any subsequent tasks.

This was more than just whimsy. Giving the agents these personas achieves two useful ends for me. Firstly, I enjoy it, so it makes work feel less like a chore. Secondly, it forces me to direct the LLM in ways that work well with such models. They are word prediction machines, so in my experience treating them like a compiler leads to more frustrating work than directing them the way a Star Trek captain might direct their crew. In fact, if you watch the best Star Trek series (subjective, but for me that means Deep Space 9, Next Generation and Voyager), the way the crew direct the ship or station computer also seems to me an excellent model for interacting with an LLM.

Different kinds of satisfaction

Using LLMs doesn’t give me the same feeling of satisfaction as growing my Zettelkasten does, but I found that giving the agents personalities at least gave me a feeling of fun while doing some work I wouldn’t otherwise have done. This for me is the big win in agentic AI as things stand today. With it I can focus on what I want without really paying the opportunity cost of neglecting features or tech debt, because the agents can handle those for me while I focus on the real work.

Growing my Zettelkasten, on the other hand, makes me feel like I can become more effective as a person over time. I mentioned earlier that I’d need society to be different for this to be the main way I work and I mean it. Had I no bills to pay and no family responsibilities I could happily spend my days growing my knowledge and looking for interesting ways to apply it. But Star Trek’s post-scarcity society isn’t with us yet, and until it is I’d bet on the LLMs to help us get there more than a million Zettelkasten.